IOM: The Economics of Better Environmental Health

Over the past two decades, epidemiological studies have strengthened the link between air pollution and specific respiratory ailments, yielding better valuations for the pollution-related costs of illness and thus pinpointing the benefits of environmental regulations. Much work remains to be done, however, in linking air pollution to other important health outcomes, such as cancer, infant mortality, and even doctor visits. Nagging questions also remain about how best to translate health effects into economic values.

image: Ionescu Ilie Cristian/Shutterstock

These were some of the questions addressed at the 14 November 2006 Roundtable on Environmental Health Sciences, Research, and Medicine, a project of the National Academies' Institute of Medicine cosponsored by the NIEHS along with several other public and private entities. Economists and public health analysts outlined developing methodologies to identify and quantify the health benefits of reduced air pollution and to pinpoint costs to industry of complying with air quality regulations.

"Overall, estimating risk from air pollution is becoming more precise as the pathway from air pollution to health is better characterized," said C. Arden Pope, a Brigham Young University economist, at the roundtable. Monitoring large groups of people for long periods has enabled researchers to better control for confounding factors such as age, sex, and cigarette smoking, said Pope. More interdisciplinary work is needed to expand the scope of health benefits that can result from reduced pollution as well as further pinpoint measurable compliance costs of regulation.

Calculating Costs

Health benefits are integrated into regulatory decision making at the end of a complex modeling structure that begins with simulations of air emissions reductions likely to result from a particular regulatory strategy. Other models determine likely changes in human exposure to pollution and probable improvements to public health resulting from the strategy. Improvements to public health—reductions in incidences of disease or mortality—are assigned monetary values so that benefits of regulation can be measured against the costs of implementing and complying with them.
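The chain described above, from emissions to exposure to health effects to dollars, can be sketched in a few lines of Python. This is only an illustration of how the steps connect: the function names, the linear concentration-response assumption, and every coefficient are hypothetical placeholders, not values or methods taken from any EPA analysis.

```python
# Toy version of the modeling chain. All names and numbers are hypothetical.

def concentration_change(emission_cut_kilotons, ug_m3_per_kiloton):
    """Air-quality step: emission reduction -> drop in ambient concentration."""
    return emission_cut_kilotons * ug_m3_per_kiloton


def avoided_cases(delta_concentration, baseline_cases, risk_per_ug_m3):
    """Health step: assumed linear concentration-response on baseline incidence."""
    return baseline_cases * risk_per_ug_m3 * delta_concentration


def net_benefit(cases, value_per_case, compliance_cost):
    """Economic step: monetized health benefits minus compliance costs."""
    return cases * value_per_case - compliance_cost


# Hypothetical rule: cuts 4 kilotons of emissions per year.
delta = concentration_change(emission_cut_kilotons=4, ug_m3_per_kiloton=0.5)
cases = avoided_cases(delta, baseline_cases=10_000, risk_per_ug_m3=0.005)
net = net_benefit(cases, value_per_case=50_000, compliance_cost=3_000_000)

print(f"concentration drop: {delta} ug/m3")
print(f"cases avoided per year: {cases:.0f}")
print(f"net benefit: ${net:,.0f}")
```

Because each step feeds the next, an error in any one link (the air-quality model, the health response, or the dollar value per case) carries through to the final benefit-cost comparison.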

Monetization is controversial largely because of the need to place a value on premature death, and the sheer logistics of the task—simply calculating the number of doctors' visits for respiratory ailments, for example—can be daunting. Health benefits are calculated either by estimating direct costs associated with avoided illnesses or by assessing the public's willingness to pay for avoided illnesses.

"Cost of illness" calculations are generally based on hospital admissions and work days lost, which capture direct dollar savings—health care costs avoided by better air quality—but ignore the price of pain and suffering. "Willingness to pay" calculations, based on consumers' stated or revealed preferences, generally yield less certain results but give a broader picture of total benefits. These calculations give values for premature death, chronic bronchitis, and various respiratory symptoms such as asthma.

Although the cost of premature death traditionally is calculated with the "willingness to pay" method, some international organizations, such as the WHO, instead use a "disability-adjusted life years" or "life years lost" methodology. Within the United States, the use of "life years lost" remains a politically charged debate, said Daniel Greenbaum, president of the Boston-based Health Effects Institute; although "life years lost" calculations may better reflect the impact of air quality regulations, these calculations also routinely lower the estimated benefits of regulation and are criticized as devaluing elderly citizens. This methodology also requires more costly and time-consuming analysis of epidemiological data and a more precise understanding of how the timing of exposure influences health effects.
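A small worked example suggests why a "life years lost" approach tends to lower estimated benefits when most avoided deaths occur among older people. The dollar values, group sizes, and remaining life expectancies below are invented for round arithmetic, and the two functions are a simplified sketch rather than the method of any agency.

```python
# Hypothetical comparison of two ways to value avoided premature deaths.

VALUE_PER_DEATH = 6_000_000      # assumed flat value per death avoided
VALUE_PER_LIFE_YEAR = 150_000    # assumed value per life year gained


def value_by_deaths(deaths_avoided):
    """Every avoided death counts the same, regardless of age."""
    return deaths_avoided * VALUE_PER_DEATH


def value_by_life_years(deaths_avoided, remaining_life_years):
    """Each avoided death is weighted by the years of life it preserves."""
    return deaths_avoided * remaining_life_years * VALUE_PER_LIFE_YEAR


# Two hypothetical groups with the same number of deaths avoided.
older = {"deaths_avoided": 100, "remaining_life_years": 10}
younger = {"deaths_avoided": 100, "remaining_life_years": 40}

flat_older = value_by_deaths(older["deaths_avoided"])
years_older = value_by_life_years(**older)
years_younger = value_by_life_years(**younger)

print(f"per-death value, older group:   ${flat_older:,}")
print(f"life-year value, older group:   ${years_older:,}")
print(f"life-year value, younger group: ${years_younger:,}")
```

With these placeholder numbers the older group's benefit falls from $600 million to $150 million under the life-year method, while the younger group's stays at $600 million. That gap is the source of the criticism that the approach devalues elderly citizens.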

The EPA's benefit–cost analyses for regulatory purposes come under strong scrutiny in part because the agency's assessments of the benefits of EPA regulations exceed those of all other major federal regulations, according to Progress in Regulatory Reform, a 2004 report by the Office of Management and Budget. The study calculated the total annual benefits of federal rules from 1993 through 2003 at $63.3–169.3 billion (in 2001 dollars). Of that total, EPA regulations contributed $37.6–131.7 billion in benefits.

The EPA uses costs of lives lost and health effects avoided by reduced pollution in its benefit–cost analyses, Greenbaum said, such as those conducted periodically on the implementation of the Clean Air Act. The EPA has published two Benefits and Costs of the Clean Air Act reports to date on this topic. One was a 1970–1990 retrospective study and the other a 1990–2010 prospective study. The latter estimated the value of premature deaths avoided at about $100 billion. Jim DeMocker, senior policy analyst in the EPA Office of Air and Radiation, said the health benefits quantified within this prospective study ranged from $26 billion to $270 billion, depending on the method used to calculate reduced mortality and other benefits.

The EPA is now undertaking a second prospective study of the effects of implementation of the Clean Air Act covering the period 1990–2020, and agency economists are struggling to find the right method for valuing premature death and other health effects. The EPA's Advisory Council on Clean Air Compliance Analysis has recommended that for this second prospective study, EPA economists revise mortality risk valuation estimates, and estimate exposure and effects of air toxics.

Toward Better Estimates

Mortality risk valuations are only one set of challenges to benefit–cost analysis. Consensus has not emerged on defining a clear-cut set of health benefits from reduced air pollution or on quantifying the lag time between reductions in exposure to pollution and the realization of health improvements among affected populations. Political controversy continues, as well, over the EPA's methodologies for determining industry's costs of regulatory compliance.

Richard D. Morgenstern, senior fellow at the Washington, DC–based Resources for the Future, said extensive surveys of the literature on the EPA's benefit–cost calculations have shown that both the costs of regulation and the potential emission reductions from regulation are overstated, in part because of the difficulties in establishing precise compliance cost estimates before a regulation is implemented. There is less clarity about the accuracy of the EPA's forecasts on environmental impacts, including health impacts, he said.

Morgenstern identified several cost and benefit factors that are difficult to estimate accurately. One is the impacts associated with technical changes that reduce regulatory compliance costs. "We underestimate technical change," he said, noting that often "the benefit–cost analysis must reflect the use of certain [proven pollution control] technologies." In fact, industry often can respond to regulation with more efficient, less expensive technologies that are not easily incorporated into EPA cost calculations. Morgenstern cited a study published in the Spring 2000 issue of the Journal of Policy Analysis and Management, which found that, in each of seven cases examined, the EPA's regulatory impact assessments overestimated the cost of using economic incentives such as emissions trading.

Costs and benefits can be affected as well by changes to prospective rules after benefit–cost calculations are complete, or by incomplete implementation of regulations. Uncertainty associated with calculating baseline air pollution and health conditions before regulations are imposed will also affect how well we can estimate the benefits of new rules.

New frontiers are developing on the scope of health effects linked to air pollution. To date, benefit–cost analyses of air regulations routinely take into account the link between particulate matter pollution and mortality, chronic bronchitis, hospital admissions, asthma-related emergency room visits, acute respiratory symptoms, and asthma attacks. But many other potentially important health effects are not fully quantified or considered, says Greenbaum. Among these are cancer, ozone mortality, infant mortality, decreased lung development in children, doctor visits, and new incidences of asthma. Greenbaum said these health effects are not quantified because researchers lack appropriate baseline incidence rates, because epidemiologists lack enough evidence to link these effects to air pollution, and because the effects are not easily monetized.

As scientists grapple with the uncertainty of estimating and quantifying health benefits of environmental regulation, they are best served by conducting sensitivity analyses of the estimates that they do have, and by stressing post-implementation assessments of benefits and costs, Greenbaum concluded.
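One simple form of the sensitivity analysis mentioned above is a one-at-a-time sweep: hold every input at its central estimate, move a single input to its low and high plausible values, and record how far the net benefit swings. The sketch below assumes a deliberately simplified net-benefit formula, and all parameter names, central values, and ranges are hypothetical.

```python
# One-at-a-time sensitivity sweep. All figures are hypothetical placeholders.

def net_benefit(deaths_avoided, value_per_death, compliance_cost):
    """Simplified net benefit: monetized mortality benefit minus cost."""
    return deaths_avoided * value_per_death - compliance_cost


BASE = {
    "deaths_avoided": 200,
    "value_per_death": 5_000_000,
    "compliance_cost": 400_000_000,
}

# Assumed low and high plausible values for each input.
RANGES = {
    "deaths_avoided": (100, 300),
    "value_per_death": (2_000_000, 8_000_000),
    "compliance_cost": (300_000_000, 600_000_000),
}


def one_at_a_time(base, ranges):
    """Vary one input at a time; return (min, max) net benefit per input."""
    results = {}
    for name, (low, high) in ranges.items():
        outcomes = [net_benefit(**{**base, name: value}) for value in (low, high)]
        results[name] = (min(outcomes), max(outcomes))
    return results


sensitivity = one_at_a_time(BASE, RANGES)
for name, (lo, hi) in sensitivity.items():
    print(f"{name}: net benefit ranges from ${lo:,} to ${hi:,}")
```

In this toy case the value placed on a premature death moves the result the most, from break-even to $1.2 billion, which echoes why mortality valuation attracts so much of the debate.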

Both Greenbaum and Pope also pointed to the need for research that will yield better health care cost estimates. As one example, Greenbaum noted that over time, the prevalence of asthma rises, while treatments for the condition continue to improve—trends that are not captured in benefit–cost analyses of air regulations.

Jan Gilbreath

TRC: Mission Accomplished

Toxicogenomics is no longer in its infancy. The field is poised to make significant contributions to risk assessment, drug screening and development, clinical diagnosis and therapy, and policy decision making. That was the general consensus that emerged from the final meeting of the Toxicogenomics Research Consortium (TRC), a multicenter collaborative initiative established by the NIEHS in 2001 to serve as an extramural arm of the institute's National Center for Toxicogenomics (NCT).

The conference, "Empowering Environmental Health Sciences Research with New Technologies," was held 4–6 December 2006 in Chapel Hill, North Carolina, and was sponsored by the NIEHS, the University of North Carolina at Chapel Hill (UNC-CH) Center for Environmental Health and Susceptibility, the UNC-CH Lineberger Comprehensive Cancer Center, and Agilent Technologies, which manufactures microarrays along with other measurement tools. The meeting included presentations from grantees in two other recent efforts to support the development of applications of the "omics" technologies: the NIEHS Functional Proteomics Initiative, which was established in 2002, and the NIEHS/NIAAA Metabolomics Initiative, a consortium started in 2005 by the NIEHS and the National Institute on Alcohol Abuse and Alcoholism.

The conference began with a symposium recognizing the contributions to the field of toxicogenomics by the NCT and its director, Raymond Tennant. Tennant championed what has proven to be a vital concept in toxicogenomics: "phenotypic anchoring," or the idea that the results of microarray experiments must be associated with particular phenotypes to be of informative value.

Richard Paules, a senior scientist in the NIEHS Laboratory of Molecular Toxicology, elaborates: "In order to understand the plethora of gene expression changes, where you've got five or ten thousand changes in a particular response [or] disease state, . . . it's important to make a correlation, a link between a particular biological event and a group of gene expression changes. The linkage can then be tested, and subsequent experiments conducted in a hypothesis-driven manner."

A Refined Tool

The TRC was designed to last for five years, but is now in an additional sixth year with limited funding to wrap up outstanding projects. During its lifetime, the TRC has made tremendous strides, providing an infrastructure to validate the technology. "From the standpoint of where we started, when toxicogenomics was just a really neat tool, we've moved the tool forward," says William Suk, director of the Center for Risk and Integrated Studies in the Division of Extramural Research and Training (CRIS/DERT) at the NIEHS. "It's a tool that can now be used to address some very specific hypotheses . . . with regard to chemical structure and function."

Toxicogenomics can now be considered a viable tool because for all intents and purposes the TRC, through a series of consortiumwide experiments, has accomplished one of its primary missions—standardization of microarray platforms and experimental methodologies. "We have achieved a high level of standardization in the technology," says Tennant. "You can now use any of the commercial [microarray] platforms with confidence that you're going to get a reliable, reproducible answer."

One of the major achievements in the standardization process has been the development of statistical tools designed to control for inevitable variation in experimental conditions and methods. "We're reaching a point where we understand where that variability is coming from and how to minimize it and control for it," says conference co-organizer David Balshaw, a CRIS/DERT program administrator.

Ivan Rusyn, an assistant professor in the Department of Environmental Sciences and Engineering at UNC-CH, and a TRC grantee, agrees that standardization is a crucial step forward for toxicogenomics. "The task of standardization seems mundane and boring and not scientifically challenging," he says, "but as toxicology is really applied science, we not only think of these new frontiers in science, but also how you can produce science that is [credible] to the public and to the regulator and can be used in some very practical applications."

Are the "Omics" Ready for Prime Time?

Meeting participants were cautiously optimistic that toxicogenomics, proteomics, and metabolomics are suitably advanced to begin to find application in policy decision making, in clinical medicine, and in broadening understanding of how gene–environment interactions can lead to human disease. According to Balshaw, we are entering "the age of systems biology," with a growing ability to integrate data at different levels of organization and increasing levels of complexity.

"You can look at all twenty-one thousand genes simultaneously," he says. "You can look at how the products of those genes—the proteins—are modified dynamically through phosphorylation and ubiquitination, and you can look at the products of those reactions—the small molecules in metabolomics. You can then begin to look at the integration of data at those three levels of biological organization to truly understand the mechanisms of environmentally induced disease."

Tennant is confident that most of the predicted applications of toxicogenomics and the other technologies will come about, but cautions against unrealistic expectations of how long it will take. "I would say that plausibly within ten years, [toxicogenomics] could well be available in the clinic, as very targeted arrays and targeted platforms," he says.

A test array for acetaminophen exposure, just one of several potentially toxic exposures studied by TRC scientists, may be one of the first applications to emerge from consortium experiments. "Our goal is to provide clinicians with a better tool to interpret liver injury with acetaminophen exposure, and a basis for making decisions on how to respond to overdose patients, many of whom come in borderline comatose," says Paules. According to the American Association of Poison Control Centers, in 2003 more than 65,000 patients were seen in health care facilities for potential acetaminophen poisoning, and 327 died. "If we had a signature in the blood that would give insight as to whether an individual has been exposed to a severely toxic level and has suffered a severely toxic injury to the liver, it could help the clinician treat that patient," Paules explains.

Genomics technologies are already having an impact in the pharmaceutical world, as evidenced by presentations at the meeting from Cynthia Afshari of Amgen and Weida Tong of the FDA. Amgen, a biotherapeutics company, is using microarrays to screen candidate drug compounds for toxicity in vitro. The FDA recently issued guidelines to the drug industry to facilitate submission of genomic data, and is conducting its own standardization efforts, including an initiative called Microarray Quality Control.

Onward

Although the TRC has run its course, there is still ample room for further development of the technologies, and there is still a great deal of knowledge to be gained from their implementation and continued refinement.

For example, TRC members at the Massachusetts Institute of Technology (MIT) have invented a rodent liver on a chip, known as a liver microbioreactor. It's a three-dimensional physiological model of a rodent liver with all of the different liver cell types present, and it is responsive in the same way the whole organ in a whole animal would be. The device will allow testing of liver toxicity, which often involves multiple doses and multiple time points, to be conducted in a highly controlled high-throughput fashion. "It also lets you have a little bit cleaner system than the whole [animal], to test hypotheses about why things might be happening by adding and subtracting different cell types," says Linda Griffith, a professor of biological and mechanical engineering at MIT.

The technology has emerged from the field of tissue engineering, where the focus is on growing replacement body parts. But according to Griffith, "there's more of a push now to build replicas of human tissue to capture physiology in culture so that you can ultimately do high-throughput assays in human tissues—essentially to build a human body on a chip, to do predictive studies on how humans would respond."

The next voyage of discovery for the field may well be yet another entry in the "omics" lexicon: epitoxicogenomics, or the application of toxicogenomics techniques to characterize DNA methylation patterns throughout the genome. "We see the product of methylation changes when we look at what genes are being turned on and turned off, [but] we often don't know why they're turned on and turned off," explains Tennant. "If you can layer the methylation patterns on top of the expression patterns, the whole process of gene transcription will become much more understandable, and probably very predictable."

The field will face other steep challenges in the years to come, such as developing the technologies and methodologies, particularly the computational and biostatistical tools, to characterize the effects of low-dose exposures and exposures to mixtures of chemicals. Either condition can increase by many times the difficulty of interpreting whole-genome assay results.

Several conference participants believe that incremental progress will be made in those areas as new, more sophisticated bioinformatics applications are formulated. On the issue of mixtures, Suk says, "The biostatistics and the informatics and the mathematical algorithms are there today to say, 'this chemical does this,' . . . [but] what we don't have are the mathematical algorithms to help interpret these data."

Ernie Hood

Beyond the Bench

Clean Sweep

Adopting Safer Urban Demolition Practices

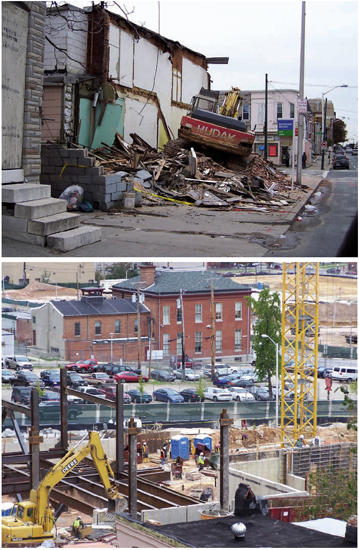

In cities undergoing urban revitalization, progress can often be a costly benefit, particularly for residents living right in the midst of the changes. As staff at the Johns Hopkins NIEHS Center in Urban Environmental Health have documented, without proper standards in place, by-products from urban renewal projects can be not only inconvenient, but also a threat to the health and safety of community members, particularly those living in low-income neighborhoods, where renewal projects tend to occur most often.

Tearing down buildings without tearing down health. Concerns about environmental hazards of demolition, such as debris left on sidewalks (top), were documented by the Environmental Justice Partnership Community Board, resulting in a set of safer practices that have been used in projects such as those by East Baltimore Development, Inc. (bottom).

image: Barbara Bates-Hopkins/Johns Hopkins NIEHS Center in Urban Environmental Health

Since the 1970s, ongoing renewal efforts in Baltimore have improved the workplace, living, and leisure choices for city residents. But the measures used to revamp the city have also introduced environmental threats including dust, waste water, large amounts of uncontained debris, noise, vibration, and rats and other pests fleeing demolished buildings. In addition, residents have voiced concerns about the possible presence of lead in the dust and debris of demolished housing. So in 2000 the center began working with community members to assess the environmental health issues.

Under the direction of faculty member Mark Farfel, center staff collected vacuum sidewalk samples before, during, and after demolition took place in neighborhoods to measure lead content. Their findings, described in the July 2003 issue of EHP and the October 2005 issue of Environmental Research, confirmed the fears of residents: more lead was present during and after demolition than before. According to Patricia J. Tracey, the center's community relations coordinator, the contractors hired by the city in an initiative to remove dilapidated properties have not appeared to use any defined safety measures to protect residents. "The current practices of urban demolition [in Baltimore] can be viewed as an environmental injustice to the residents who have to live with such projects and practices," says Tracey.

These troubling findings led to the formation of the Environmental Justice Partnership in 2003, a collaboration between the center and other concerned East Baltimore community organizations, including the Maryland Institute College of Art, the Environmental Justice Partnership Community Board (comprising representatives from 10 community organizations), and staff and faculty from the Johns Hopkins Bloomberg School of Public Health.

The partnership joined with Baltimore city agencies, community organizations, residents, and public health experts in developing a new, safer demolition prototype, which includes a set of quality assurance measures to be implemented before, during, and after demolition to protect the health of residents. These measures include removing lead-containing materials from houses before they are demolished; giving residents and city agencies proper notice before engaging in building demolition; controlling dust emissions by using established wetting practices; properly containing and promptly removing debris; cleaning and repairing streets and sidewalks; and redeveloping vacant lots. The center is having ongoing meetings with community members to get feedback on the measures, and will incorporate the feedback into future development activities.

Tracey says the city is working with the community partnership to incorporate the measures into future demolition projects. East Baltimore Development, Inc., a nonprofit organization created by Baltimore's mayor and city council to manage the revitalization of an 80-acre development of Middle East Baltimore, plans to use the safer demolition prototype in all phases of the project. Center staff have also met with Madeleine A. Shea, the new Baltimore city assistant commissioner for healthy homes, to see about getting the measures incorporated into standard city practice.

Michael A. Trush, the center's deputy director, says the Baltimore prototype can be adapted for use in other cities, with considerable benefits. "Urban demolition is a major concern throughout low-income communities, not only in Baltimore, but in other cities in the United States," he says. "We envision that the lessons learned and the policies developed from this project can be translated to other sites."

Tanya Tillett

Headliners: Metal Toxicity

NIEHS-Supported Research

image: geopaul/iStockphoto

Lead Exposure May Affect Language Ability

Yuan W, Holland SK, Cecil KM, Dietrich KN, Wessel SD, Altaye M, et al. 2006. The impact of early childhood lead exposure on brain organization: a functional magnetic resonance imaging study of language function. Pediatrics 118:971–977.

Lead exposure is known to cause behavioral problems and learning deficits in children that persist into adulthood. Delays and/or deficits in intellectual ability, academic achievement, and psychomotor development have all been associated with childhood lead exposure. Localizing functional changes in the brain has been limited, however. Now NIEHS grantee Bruce Lanphear of the Cincinnati Children's Environmental Health Center and colleagues report evidence of reorganization of the language centers of the brains of young adults with a history of childhood lead exposure, suggesting that lead exposure affects language ability.

The investigators recruited project participants from the Cincinnati Lead Study, an ongoing epidemiologic investigation into the long-term effects of childhood lead exposure that recruited pregnant women from 1979 to 1984. The subjects have been followed from birth and have had extensive documentation of lead exposure, medical history, neuromotor function, and academic achievement. Forty-two young adults participated in the current study on language ability. Their average childhood blood lead level was 14.2 µg/dL.

The Cincinnati team conducted functional magnetic resonance imaging on the subjects while they performed a "verb generation task." Subjects were instructed to silently think of verbs in response to a noun. For example, if the noun "ball" was presented, the subject might think of verbs such as "throw," "kick," or "hit." Accounting for significant potential confounders, the researchers conducted multivariable regression analysis to test the significance of the association between brain language activation and mean childhood blood lead levels.

Higher mean childhood blood lead levels were associated with significantly diminished activity in regions of the left hemisphere of the brain known to be responsible for language ability, along with compensation in regions in the right hemisphere. The authors note, however, that the compensatory alternative pathway does not necessarily yield performance equivalent to that achieved through normal brain pathway function. Similar adaptations have been documented in response to tumors, epilepsy, and stroke, though the effects seen in this study were not as severe as those observed with stroke.

Jerry Phelps