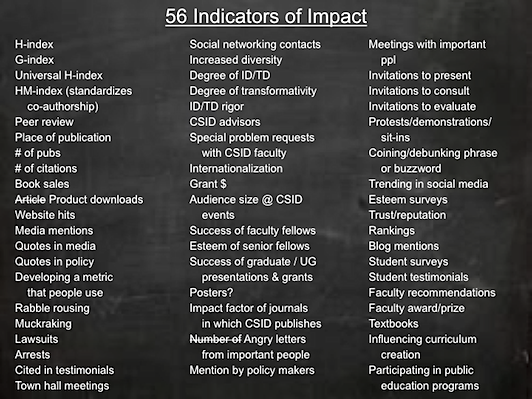

In 2011, several core members of the Center for the Study of Interdisciplinarity (CSID) at the University of North Texas held a meeting during which we imagined different ways to indicate the impact of our activities. We scribbled them on the blackboard as they occurred, and the table above is a faithful rendering of what we wrote down – complete with abbreviations. The activity was meant to be fun, as well as serious. We certainly did not imagine at the time that anyone else would be seriously interested in this list. However, when we present at conferences and include this table as one of our slides, many audience members ask where it is published. The answer, it appears, is here – and there is a modified version of the same table published as a correspondence in Nature.

Included in the list are not only quantitative indicators of scholarly impact (such as the H-index), but also qualitative indicators that we might be having some impact on the world (such as meetings with, or even angry letters from, important people). We think, especially in an age of increasing demands for accountability, that we academics ought to own impact, rather than having it determined by someone else.

The list was never meant to be authoritative. Generating it was meant, originally, to spur our own imaginations about the various ways we might document the impacts of our research. We publish it now in an effort to spur your imaginations to tackle the same task for yourselves. We would love to see comments that suggest other indicators of impact, as well as comments on our own suggestions.

Abbreviations:

ID/TD, Interdisciplinary/transdisciplinary, or sometimes interdisciplinarity/transdisciplinarity

UG, Undergraduate

ppl, people

CSID staff who contributed to authoring the list:

J. Britt Holbrook, Assistant Director (ORCID: 0000-0002-5804-0692)

Kelli Barr, Graduate Research Assistant (ORCID: 0000-0001-7048-4977)

Keith Wayne Brown, Programs Manager

CSID staff whose activities contributed to thinking about the list:

Robert Frodeman, Director

Adam Briggle, Faculty Fellow

The impact of research should be skewed toward who is paying for it. If a university funds faculty to do research, the university should be impacted by that research. If the public funds research, the public should be impacted by that research.

I somewhat disagree that impact should go to whoever funds the research. Let’s think about who funded some of the most profound scientific discoveries of the late 19th and early 20th centuries.

Much of what you list is not impact, it’s reach. Impact makes some kind of measurable/documentable difference to individuals, organisations and society at large. Reach extends your potential influence beyond academia but may not always result in impact. These are important distinctions.