
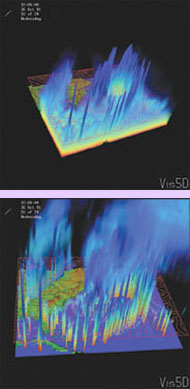

Two views of a 20-km-resolution simulation of the “Perfect Storm.” The top figure depicts atmospheric moisture; the bottom figure shows rainfall and cloud water.


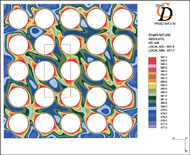

This computer image shows the core of a pressurized water reactor. The colors illustrate temperature. The fuel pins are shown as white circles.


These images, from research by nuclear physicists Steve Pieper and Bob Wiringa, represent different angular momentum states of the deuteron, a two-body hydrogen nucleus comprising one proton and one neutron. Similar structures for nucleon pairs are found in heavier nuclei.

Parallel computers ‘evolutionize’ research |


A major research trend is harnessing advanced computers to complement theory and experiment. Advanced computing allows scientists to conduct experiments that could not otherwise be done, to test possible experiments before investing the time and money to carry them out physically, and to create models of complex phenomena.

Fueling the growth of scientific computing are the rapid expansion and availability of parallel computing facilities, such as Argonne’s Chiba City, a 512-processor parallel computer based on the Linux operating system. A typical desktop computer has only one central processing unit. A parallel computer, however, has many processors that coordinate to work on smaller parts of a larger problem. Modern technology can even link computers around the world, providing still greater power to solve complex problems (see Globus Toolkit enables Grid computing).

Argonne computer scientist Mike Minkoff sees scientific computing as a natural evolution of science. “Research,” he said, “combines three activities: experimental observation; the development of mathematical models, such as Newton’s laws of motion, to describe the observations; and computation to test the models by applying them to new experimental observations.” In the past 20 years, the computer has given experimentalists more direct feedback, partly by speeding computation and lowering the cost of solving larger problems and partly by enabling researchers to develop and test models quickly.

Modeling the “Perfect Storm”

“Global climate models don’t do a good job of predicting regional climate,” Taylor said, “because the smallest area they examine is typically a square that measures 200 by 200 kilometers. A grid cell that large can’t reveal extreme weather, which tends to be local in scale.”

Taylor’s models use 1- to 10-km cells. “At this level of detail,” he said, “the model can include specific regional features—mountains, valleys, bodies of water—that shape local winds and precipitation. You begin to see extreme events, such as more intense rainfall and wind storms.”

Taylor’s group has developed a model of the “Perfect Storm,” which hit the North Atlantic in October 1991 and later inspired a best-selling book and a Hollywood movie. At 20-km resolution, the model revealed a second hurricane that weather services never reported.

Because computing time rises sharply as resolution increases (halving the width of the grid cells alone quadruples the number of cells needed to cover the same area), regional climate modeling requires considerable computing power. To meet this requirement, Taylor’s group uses Argonne’s Chiba City. “The calculations scale well,” he said, “and we can access a large number of processors to perform the runs cost-effectively. Argonne is one of the few places in the world with a large-scale cluster testbed available for this kind of research.” His research is funded by DOE’s Office of Biological and Environmental Research and the U.S. Environmental Protection Agency.

Mapping reactor behavior

A collaboration of Argonne, the Korea Atomic Energy Research Institute (KAERI) and Purdue University is using advanced computing tools to predict fuel and coolant temperatures throughout a reactor core during normal and abnormal conditions. Because massively parallel computers are expensive to operate and the model is large, these tools will be used to improve and verify smaller, more economical models for routine use. “This project is using massively parallel computers to look at the complex feedback relationships between reactor fuel and coolant in an integrated reactor system,” said Weber, director of the Reactor Analysis and Engineering Division.

Nuclear reactors use fission to produce heat. The heat boils water to produce steam, and the steam turns a turbine, which generates electricity. Heat generation in the core depends on two phenomena: neutron flux, the number of neutrons passing through a given area per unit time; and neutron cross section, a measure of the probability that a neutron striking a fuel nucleus will cause fission.
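For readers who want the quantitative link, a textbook reactor-physics relation (standard notation, not taken from the article or from the collaboration's codes) expresses the local heat-generation rate as the product of the neutron flux and the fission cross section:

$$
q''' = E_f \, \Sigma_f \, \phi, \qquad \Sigma_f = N_f \, \sigma_f
$$

Here $q'''$ is the heat generated per unit volume per unit time, $E_f$ is the energy released per fission (roughly 200 MeV), $\phi$ is the neutron flux, $\sigma_f$ is the microscopic fission cross section of a single fuel nucleus, $N_f$ is the number of fissile nuclei per unit volume, and $\Sigma_f$ is the resulting macroscopic cross section. Because $q'''$ is proportional to both $\phi$ and $\Sigma_f$, anything that lowers the effective cross section, such as a rise in fuel temperature, lowers heat generation even when the flux is unchanged.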

Higher neutron flux can raise fuel temperature, but higher fuel temperature can reduce the neutron cross section, which lowers the fission rate and may reduce fuel temperature. “Feedback is balanced when the reactor operates normally at constant power,” Weber said, “because heat production and heat removal are equal. But when something changes—an operator adjusts the power or some short-term incident occurs—the system’s response depends on feedback and operator actions.”

The Argonne-KAERI-Purdue collaboration is building on previous Argonne work, which churned through a 240-million-cell model of a reactor core in 58 hours on a 200-processor parallel computer at IBM’s SP Benchmark and Enablement Center in Poughkeepsie, N.Y. “The earlier work,” Weber said, “looked only at the temperature of the coolant as it flows through the core. This project incorporates details of neutron flux and fuel temperatures that we couldn’t attempt without massively parallel computers.”

The new model also accounts for turbulent mixing, which occurs when the flowing water encounters components in the core. The mixing is beneficial, Weber said, because it makes the coolant temperature more uniform and enhances heat transfer, but the turbulence makes the pumps work harder. “Our work may help redesign components to promote mixing while reducing pumping losses.”
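The fuel-temperature feedback Weber describes can be illustrated with a deliberately crude, single-node model. This is a hypothetical sketch, not the collaboration's method: it treats neutron flux as a fixed input set by the operator, lets the fission cross section fall linearly with fuel temperature (the coefficient `alpha` is an assumed value), removes heat in proportion to the fuel-coolant temperature difference, and integrates the resulting energy balance with explicit Euler steps. All names (`ToyCore`, `simulate`, `steady_state`) and all parameter values are illustrative inventions.

```python
"""Toy single-node model of fuel-temperature feedback.

Hypothetical illustration only: parameter values are invented for readability
and are not taken from the Argonne-KAERI-Purdue reactor models.
"""
from dataclasses import dataclass


@dataclass
class ToyCore:
    flux: float = 1.0            # neutron flux, normalized; treated as an operator-set input
    sigma0: float = 1.0          # fission cross section at the reference fuel temperature
    alpha: float = 0.002         # assumed fractional drop in cross section per kelvin
    t_ref: float = 900.0         # reference fuel temperature, K
    k_heat: float = 100.0        # heat produced per unit (flux * cross section), arbitrary units
    h: float = 0.3125            # heat removed per kelvin of fuel-coolant difference
    heat_capacity: float = 50.0  # lumped heat capacity of the fuel

    def cross_section(self, t_fuel: float) -> float:
        # Hotter fuel -> smaller effective cross section (negative feedback).
        return self.sigma0 * (1.0 - self.alpha * (t_fuel - self.t_ref))

    def heat_generated(self, t_fuel: float) -> float:
        # Fission heat is proportional to flux times cross section.
        return self.k_heat * self.flux * self.cross_section(t_fuel)

    def heat_removed(self, t_fuel: float, t_coolant: float) -> float:
        # Coolant carries away heat in proportion to the temperature difference.
        return self.h * (t_fuel - t_coolant)


def simulate(core: ToyCore, t_fuel: float, t_coolant: float,
             dt: float = 1.0, steps: int = 3000) -> float:
    """Integrate C dT/dt = generated - removed with explicit Euler steps."""
    for _ in range(steps):
        net = core.heat_generated(t_fuel) - core.heat_removed(t_fuel, t_coolant)
        t_fuel += dt * net / core.heat_capacity
    return t_fuel


def steady_state(core: ToyCore, t_coolant: float) -> float:
    """Closed-form fuel temperature at which heat generated equals heat removed."""
    p = core.k_heat * core.flux * core.sigma0
    return (p * (1.0 + core.alpha * core.t_ref) + core.h * t_coolant) / (core.h + p * core.alpha)


# Start at the balanced operating point (900 K fuel, 580 K coolant),
# then raise the flux by 10 percent with and without feedback.
baseline = simulate(ToyCore(), t_fuel=900.0, t_coolant=580.0)
with_feedback = simulate(ToyCore(flux=1.1), t_fuel=900.0, t_coolant=580.0)
no_feedback = simulate(ToyCore(flux=1.1, alpha=0.0), t_fuel=900.0, t_coolant=580.0)
print(round(with_feedback, 1), round(no_feedback, 1))  # prints 918.8 932.0
```

With these made-up numbers, a 10 percent flux increase raises the equilibrium fuel temperature by about 19 K rather than the 32 K it would rise without feedback: the hotter fuel generates less heat per neutron, so heat production and heat removal come back into balance at a lower temperature. Real core models track flux, fuel temperature, and coolant flow across many millions of cells rather than a single node.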